I do my best to be self-aware, but it’s kind of hard to tell whether it’s working.

Self-improvement has always felt important to me. Growth mindset and all; if there’s something I can do to make myself a better person, why wouldn’t I do it? Maybe there are some improvements that wouldn’t be worth the difficulty, or that I’d have to put off for later, but I’d at least want to know about them.

So I try to self-assess regularly. But how does one judge their own judgements? I can’t rely on self-assessment to tell how effective my self-assessments are–that’s just pointing a mirror at itself. I need some evidence that comes from outside my own head.

I can (and do) ask my friends and family, of course, but although I can certainly count on them to be honest, I can’t count on them to be unbiased. Who else could I ask?

People who don’t know me very well? Less likely to be biased, but also less likely to have deep insights. Their opinions may be useful for ensuring I make a good first impression, but they’re not much help for deeper self-improvement.

Enemies? I’m sure I have at least a couple, but I don’t know who most of them are, and they probably wouldn’t want to give me any useful advice anyway.

What about former friends? This seems more promising. They liked me and knew me well at some point, but as time passes and we grow further apart, they’re less likely to feel the emotional attachment that leads to strong bias. On the other hand, that also means their insights might be out of date.

I think the best people to ask would be my exes.

In my case, I’m no longer close to any of my exes, so I wouldn’t be too worried about strong positive biases. In most cases, we parted on more-or-less good terms too, so I wouldn’t be that concerned about negative bias, either–but even if I were, criticisms from an ex have an interesting feature that makes them more valuable than feedback from a close friend or even an enemy.

In most cases, your ex isn’t likely to have known you for as long as your closest friends, and that means that whatever criticisms they may have are more likely to be based on things you actually did. This doesn’t guarantee they’ll be fair criticisms, of course! You don’t have to tell me that exes can harbor irrational grudges. But those grudges, especially if you were together for only a short while, are more likely to at least stem from things that actually happened, rather than from the positive or negative traits that people who’ve known you longer have inferred over time.

And there’d be other advantages, too: when you’re romantically involved with someone, they often see parts of you that no one else gets to see, not even your closest friends. (This can be especially true if you lived together.) My exes might have insights into parts of my character that nobody else has had a chance to judge.

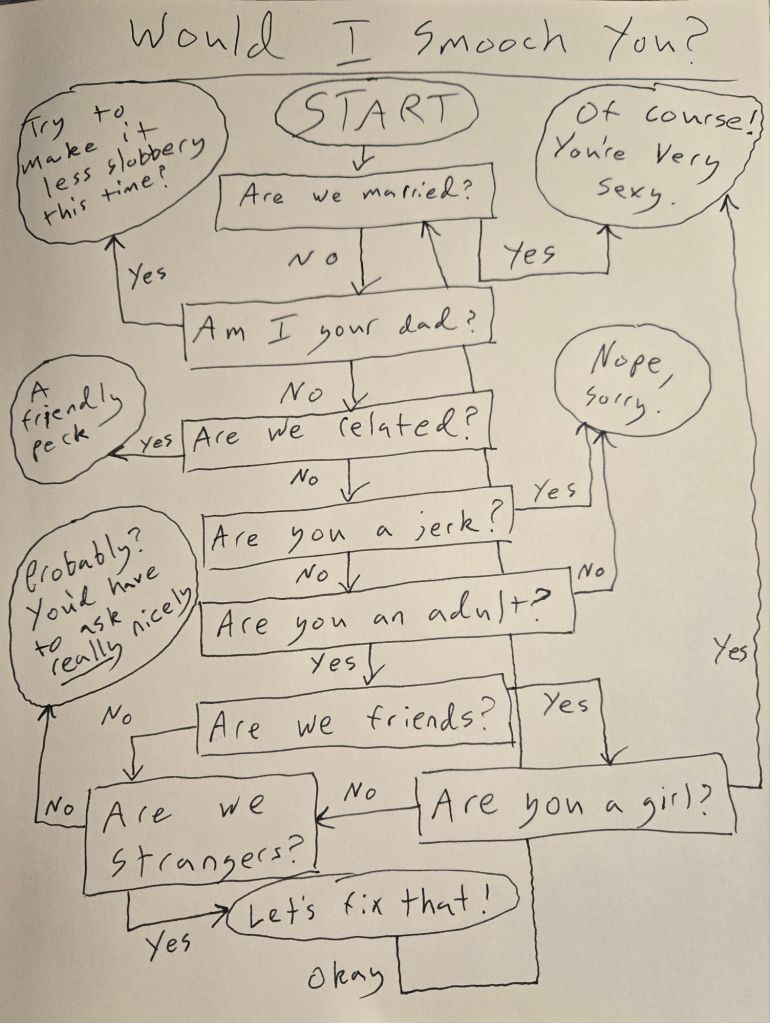

There’s just one problem with this idea: it would be really awkward to re-contact an ex just to ask for self-improvement advice. Maybe we could make exit surveys for relationships a thing? If any of y’all want to try becoming my ex, let’s test it out.

Oh, and if any of my actual exes happen to be reading this–what do you think?