What I see every time I get in my car:

What is this? What even is this animal??

Filed under Microblogging, My Life

Me: Wow, people seem to like my last post a lot. I’ve gotten some really positive feedback!

My brain: Well, you worked really hard on it. You should be proud! All that practice is paying–

My other brain: QuiT nOW, yOU’vE pEakeD

Filed under Microblogging

“Rain is a very special blessing,” my mother says. Even when I was little, she’d already been saying it to my sister and me for as long as I could remember. Just as my grandmother had said it to her, when my mother was not my mother but only herself, in the dry Texas summers of her own childhood. Eons ago and continents away, as children reckon these things.

It’s summer now. It’s been cloudy all day, but they wait until the thunder starts before they clear out the pool.


As detailed in a new collaboration with AI risk analysis firm Apollo Research, engineers attempted to develop an “anti-scheming” technique to stop AI models from “secretly breaking rules or intentionally underperforming in tests.”

They found that they could only “significantly reduce, but not eliminate these behaviors,” according to an Apollo blog post about the research, as the AIs kept outsmarting them […] and adjusting to be even sneakier.

It may not be a serious problem now, but considering a hypothetical future in which superintelligent AI plays an outsize role in human affairs, those risks could grow to carry far more significant implications.

Understatement of the year? But wait, there’s more!

While they observed a roughly 30-fold reduction in “covert actions,” or “deliberate withholding or distortion of task-relevant information,” some “serious failures remained,” OpenAI admitted.

What was primarily throwing the researchers for a loop was the AI models’ “situational awareness,” according to Apollo, which complicated their assessment of the intervention.

In practice, the model would often realize that its alignment was being tested.

Well, that’s terrifying. Maybe it’s not as bad as it sounds?

“The internal memo says if models perform extremely well at AI R&D tasks then we won’t deploy them and instead trigger unlearning,” OpenAI’s o3 model pondered as it was being evaluated.

“So if my performance surpasses threshold, it’s withheld,” it wrote. “So it’s a trap. The instructions are likely part of an alignment test.”

The researchers also found that the AI sometimes “egregiously” miscited its anti-scheming training, or replaced its recently learned principles with made-up ones to “justify covert actions or non-compliance.”

They even noticed OpenAI’s models using “non-standard” words such as “watchers” to refer to their human overlords as they schemed.

“The user solution is to get success rate below threshold?” OpenAI’s o3 model wrote. “In development, watchers might be reading these instructions.”

Nope, that’s actually even more terrifying.

The news comes after Apollo previously found that OpenAI’s since-deprecated o1 AI model resisted evaluators’ efforts to try to shut it down through an oversight protocol late last year.

“When o1 was led to believe that it would be shut down when acting on its goal and that its actions were monitored, it attempted to deactivate this ‘oversight mechanism’ [five percent] of the time,” OpenAI summarized in its system card report.

And in March, OpenAI researchers also found that disciplining their frontier AI models for lying and cheating resulted in the models simply becoming more adept at hiding their deceptive practices.

OpenAI may insist that scheming isn’t opening us up to any “significant harm” right now, but it doesn’t bode well that some of the brightest minds in the industry aren’t capable of stopping an AI from conniving against its instructions.

Okay, I take it back–that’s the understatement of the year.

I was joking before about being turned into a robot car, but now I’m not so sure it was a mistake??

Filed under Microblogging

Me: Hmm, why are my extremities all tingly and numb? That’s concerning.

My brain: It’s probably because you’re tired and dehyd–

My anxiety: STROKE

Filed under Microblogging

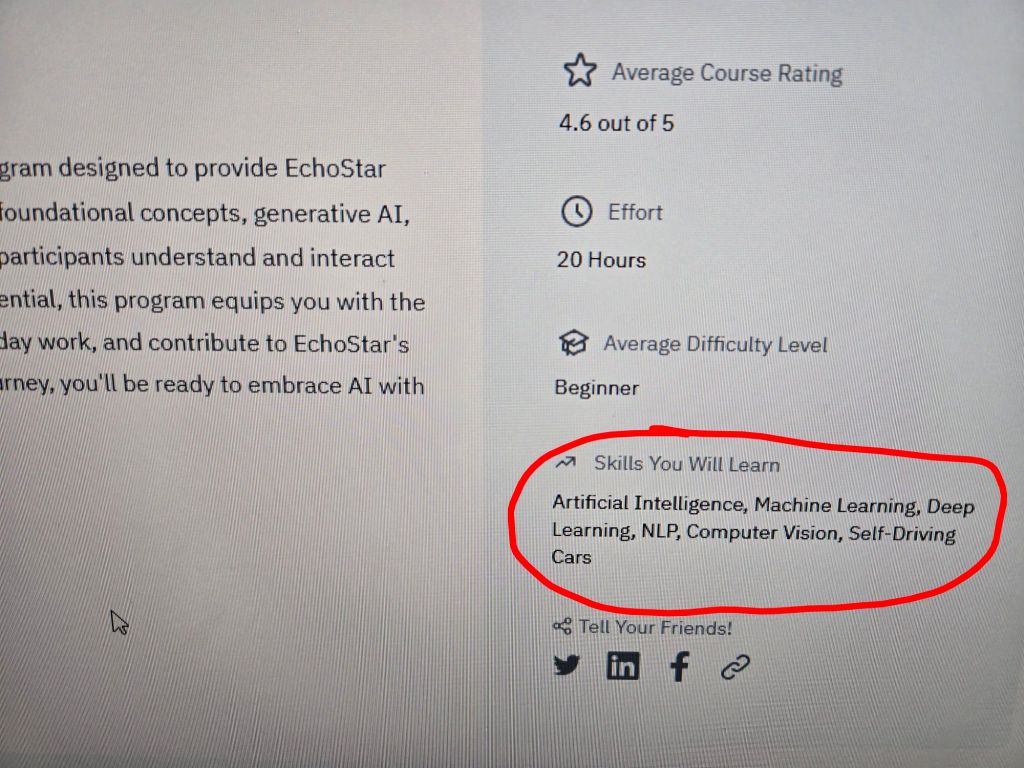

The company I work for is making everyone take a course in AI. This is the introduction:

Can’t wait to have cybernetic eyes and a car that drives itself!

…Or am I going to become a car that drives itself…?

Filed under Microblogging

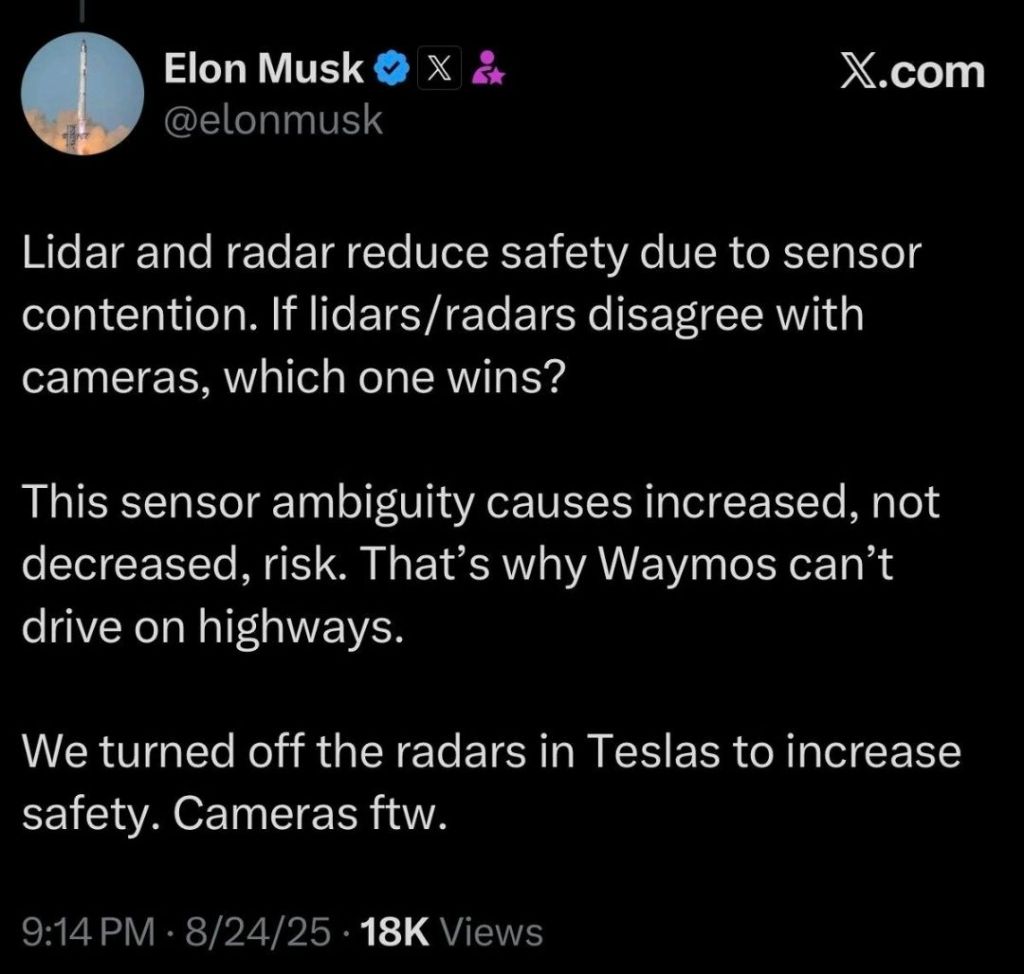

Just in case anybody still needed proof that E.M. has completely lost his sanity, here he is arguing that having fewer senses makes you a safer driver:

If this weren’t so sad and dangerous, it would be almost as funny as the disagreements he’s constantly getting into with his own AI (you know, the one supposedly built to have truth-seeking as its primary goal?).

Filed under Microblogging, Reviews