I Missed My Calling

Filed under Microblogging

Snow Day!

The kids have school off today, so naturally I’m taking a day off from my blog, too. (It makes sense because I say so!)

Please enjoy this festive winter scene:

I hope your day is a fun one!

Filed under Microblogging, My Life

Riddle Me This, Too

Am I being paranoid, or has AI actually made autocorrect worse?

If we’re going to start stuffing LLMs into every crack and crevice of our lives like cream into cheap profiteroles, could we at least include a way to give it feedback? Because I would dearly like to have some way of explaining to my phone that no, I did not and will never wish to discuss “the copy of her hair,” and starting a sentence with “I’m fact, …” makes me both look and feel like a maniac.

Filed under Microblogging

Mal-Come Again, AGAIN?

I was careful to enunciate extra-clearly this time. I guess they did leave out the ‘h,’ so… Partial success?

Filed under Microblogging

Banana Transmutation, Brownie Batter, and the Second Law of Thermodynamics

Content note: profanity, physics

This is my second attempt to gesture flailingly at an epiphany I had a little while ago. It won’t be the last, I think.

Continue readingFiled under Essays

Gratitude

Keeping a daily gratitude journal is one of the cheapest, easiest, and most well-documented ways of improving your overall happiness and mental well-being. (Like most people who know this fact, I don’t keep a daily gratitude journal.)

I don’t keep a daily gratitude journal, but when I was young my family did keep a yearly gratitude journal: every Thanksgiving, we’d take some time together to volunteer things we were grateful for and write them down. Sometimes they were things specific to the past year, sometimes they were more perennial. Sometimes they were serious, conventional things–friends, family, health, and so on–other times they were small, lighthearted, or even silly. (I recall one year saying I was thankful for “God making the Big Bang that created the universe that created the Milky Way that created Earth that created humans so they could invent the Super Nintendo so I could play videogames.” I think I was about nine?) As holiday traditions go it was pretty subdued, but it was still one of my favorites. Even just reading through what we’d written in past years never failed to put a smile on my face.

I don’t keep a daily gratitude journal, but I do have a blog! So this year, I’ve decided to revise an old tradition and share with you some of the things I’m thankful for–large and small, silly and serious. In no particular order:

- The little foldable keyboard I’m using right now to type this post on my phone.

- My close family–those I’ve chosen through friendship and marriage, as well as those I’ve been gifted by chance.

- In particular, my father. I’m writing a song for you, dad. I can’t wait for it to be finished so you can hear it.

- Having a stable job I don’t hate with a good boss who doesn’t hate me.

- The three best gifts I’ve ever bought for myself: my ErgoDox EZ bespoke mechanical keyboard, my Kensington Expert trackball mouse, and my turquoise Nintendo Switch Lite. (Yes, I’m still in love with videogames.)

- Bandcamp!

- Fulfilling my childhood dream of living in a mobile house (although my house doesn’t have an elevator or big-screen TV like kid me wanted–oh well).

- Finally diagnosing and treating my ADD.

- Getting my heart broken and having no regrets.

- Being converted to toe socks and minimalist shoes (regular sneakers look way more absurd to me than shoes with toes, now).

- My spouse’s sorcerous powers of cookery.

- My favorite (nonfiction) writers: Autumn Christian, Eliezer Yudkowsky, Paul Graham, Tevis Thompson, and Scott Alexander.

- Good porn.1

- Costco!

- My new favorite (nonfiction) writer, Chris Ferdinandi.

- Discovering that Stephanie Myer wrote and published a gender-swapped version of Twilight to prove a point, reading it, and finding it works at least as well as the original. #TeamEdythe

- Restarting my blog!

- Making an effort to get back in touch with some friends I’ve been missing.

- Seeing my writing improve enough to produce something I’m truly proud of (and which didn’t take me years or decades to finish).

And of course, I’m thankful for you, my wonderful readers. It’s hard to overstate how rewarding it’s been having an audience, even a small one. Knowing that someone other than my mom is actually reading and enjoying my writing (love you, mom) has been not only motivational, but also wonderful soul food. As always, I love you, and I hope your week finishes with a special treat and some unexpected good news. Joy and Health to you all!

- You may well ask, “What makes porn good?” or even “How can porn ever be good?” And I may well answer, “I think I have an idea for a future post…” ↩︎

Braver-y

Funny, isn’t it? Every time I used to think of you, I would imagine someone a bit worn-down…a little helpless…someone scared, or in need…someone to rescue, I suppose. Ah, what a joy it was to see again the true you, undaunted and thriving! Reality turned out to be much more interesting than my vapid, self-serving dreams–though I shouldn’t have been surprised; I’d simply forgotten how vibrant you really were. Forgotten your magnetism and fire, forgotten your strength, forgotten the way you’d conquered the mountain we climbed together, insisting that the only help you needed was my company, in spite of the pain you’d been in–all this time, I realized, I’d only been remembering an idea of you, not a person–not something real•

Every happy moment came rushing back, then, undistorted by my past self’s sour grapes and inexperience, and I finally understood how effortless things had been. All you ever had to do to make me happy was share your own happiness–and it was so easy to make you happy! Returning your smiles, holding you, listening to you talk: all of it, easy as breathing. Far too easy, I’d thought, and even back then I knew it was stupid to want a “challenge” instead of you, knew I was being a fool, but the very last shortcoming I would have guessed was a lack of imagination. Even after you were brave enough to give me a second chance, literally spelling it out for me because you were too nervous to confess out loud, I still never suspected that it wasn’t pity I fought through when I gave you that final “no”•

All through the rest of my teenage years and well into my twenties, I didn’t realize how rare it was for love to be that easy–how rare you were. Rare and precious, shining like a jewel, small and fragile-seeming but tough as diamond. For all my ego, for all my intellect, for all that I was older than you in years, I’d been far more of a child–a child and a coward. Exaggerating my petty, harmless terrors; too scared to notice the ones pinning me down; unwilling to stick my neck out for you a single millimeter; excoriating myself for all the wrong faults. All the wrong mistakes•

Reliving any amount of our past joy was more than I should have hoped for (certainly more than I deserved, after the way I’d tossed you aside), but at least I made an effort this time. Fought for it, actually–fought harder than I’d ever fought for anything, fought even though I was afraid. Enough to earn a bit of your friendship, if not your affection. A tiny fraction of what I wanted, and over much too soon, but the few days you did grant me were still beautiful. Rare and precious and shining, like jewels•

“Let’s take a step back,” you once said, but I think what I really need to do is learn how to walk forward. Except…it’s so hard to take that first step, when the direction I most want to go is the one you’ve told me not to face: toward you. Standing where I am now, even the thought of leaving you behind for good–of forgetting my feelings the way you’d forgotten yours–is almost too painful to contemplate (…though now I’m embarrassed for calling it “pain” after you gave the same name to the trials you’ve withstood, trials far worse than this, trials I know I would not have lived through, let alone overcome). Still, I have the courage to at least look forward now, thanks to your example, and when I do I see a path–terrain even more difficult than I imagined, yet a relief, too: the answer to a riddle that’s frustrated me all my life, but now that I know where to look it’s breathtakingly clear; like reading a familiar poem and suddenly seeing, for the first time, a message that had been there all along–hidden in plain sight.

Filed under Poetry

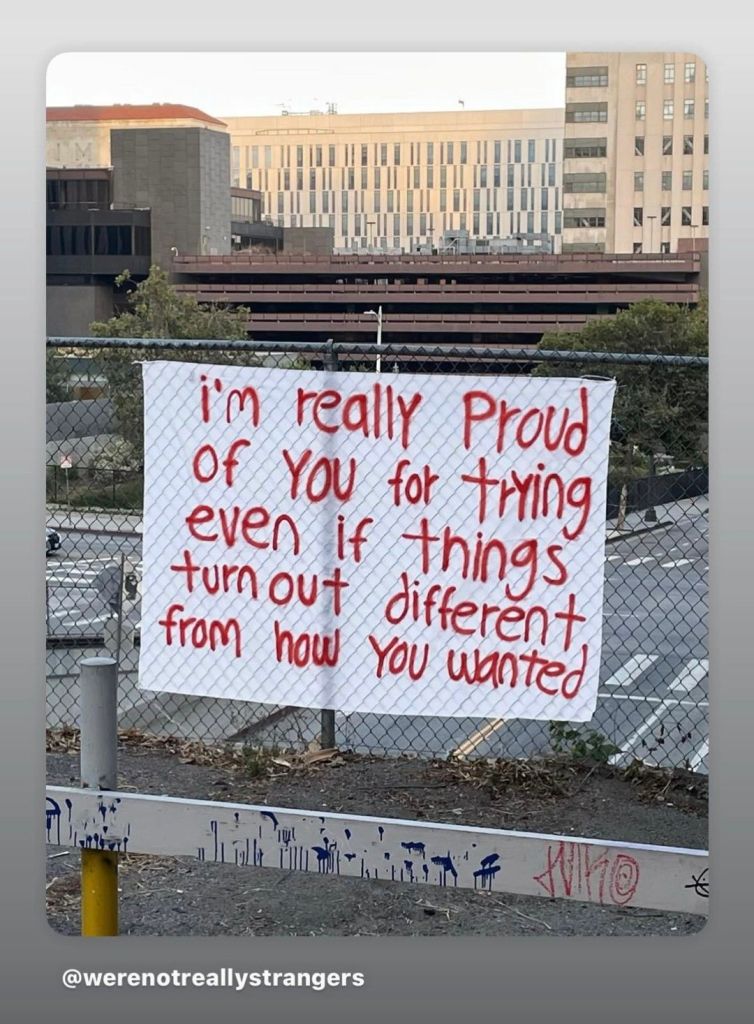

Acronyms, Novel Gags: Surprisingly Tough

Tomorrow I’ll be posting…I guess you could call it the finale of my “Angst Saga.” (I don’t think I’ll be calling it that, but you certainly could.)

A poem about heartbreak might seem like an odd choice for Thanksgiving, but I truly am grateful for the experience. I’m not one of those people who thinks pain is intrinsically valuable–it’s not–but there are some valuable experiences that wouldn’t be the same without it. For example: moments when you’ve taken a big risk that didn’t pay off, but you know it would have been a mistake not to try.

There are important lessons you can only learn from experiences that test you–times when you failed, when you grieved, when you lost, when you made an ass of yourself. If you never risk losing, you’ll never be great; if you never risk grieving, you’ll never fully love; if you never risk looking foolish, you’ll never be wise. I’m thankful I finally took an opportunity to be foolish and heartbroken for the right reasons. It’s a scar I’ll cherish.

On Friday I think I’ll say more about the other things I’m grateful for. Until then, I hope those who celebrate have a wonderful Thanksgiving, and I hope those who don’t have an equally wonderful Thursday. I love you very much.

Joy and health to you all.

Poor, Poor Thing

When I first saw this scene from Harvey (in my case it was a high-school play, not the film), I thought this was the most boring, self-centered, asinine, unimaginative wish anyone could possibly wish for. (It fits the character perfectly.)

ELWOOD. Harvey says that he can look at your clock and stop it and you can go away as long as you like with whomever you like and go as far as you like. And when you come back not one minute will have ticked by.

CHUMLEY. You mean that he actually–? (Looks toward office.)

ELWOOD. Einstein has overcome time and space. Harvey has overcome not only time and space–but any objections.

CHUMLEY. And does he do this for you?

ELWOOD. He is willing to at any time, but so far I’ve never been able to think of any place I’d rather be. I always have a wonderful time just where I am, whomever I’m with. I’m having a fine time right now with you, Doctor.

CHUMLEY. I know where I’d go.

ELWOOD. Where?

CHUMLEY. I’d go to Akron.

ELWOOD. Akron?

CHUMLEY. There’s a cottage camp outside Akron in a grove of maple trees, cool, green, beautiful.

ELWOOD. My favorite tree.

CHUMLEY. I would go there with a pretty young woman, a strange woman, a quiet woman.

ELWOOD. Under a tree?

CHUMLEY. I wouldn’t even want to know her name. I would be–just Mr. Brown.

ELWOOD. Why wouldn’t you want to know her name? You might be acquainted with the same people.

CHUMLEY. I would send out for cold beer. I would talk to her. I would tell her things I have never told anyone–things that are locked in here. (Beats his breast. ELWOOD looks over at his chest with interest.) And then I would send out for more cold beer.

ELWOOD. No whiskey?

CHUMLEY. Beer is better.

ELWOOD. Maybe under a tree. But she might like a highball.

CHUMLEY. I wouldn’t let her talk to me, but as I talked I would want her to reach out a soft white hand and stroke my head and say, “Poor thing! Oh, you poor, poor thing!”

ELWOOD. How long would you like that to go on?

CHUMLEY. Two weeks.

ELWOOD. Wouldn’t that get monotonous? Just Akron, beer, and “poor, poor thing” for two weeks?

CHUMLEY. No. No, it would not. It would be wonderful.

ELWOOD. I can’t help but feel you’re making a mistake in not allowing that woman to talk. If she gets around at all, she may have picked up some very interesting little news items. And I’m sure you’re making a mistake with all that beer and no whiskey. But it’s your two weeks.

Now that I’m older and tireder…well, I still think it’s the most boring, self-centered, asinine, unimaginative wish anyone could possibly wish for. But I’ve gained just a little bit of sympathy for the pompous old jerk. Elwood’s right, of course: two weeks would be way too long, and it would definitely be a mistake not to let the woman talk. But the rest of it? Honestly, that does sound rather nice.

Anybody else think so? We could find a nice grove of maple trees together and take turns!

Cozy

It’s that time of year again–time to bust out my favorite outfit!

Why yes, it is quite warm. Thank you for asking!

What’s your favorite cold-weather accessory?

Filed under Microblogging, Selfies